Why Not Everything Is a Skill

If you build AI coding agents, you’ve probably created an AGENTS.md (or CLAUDE.md) file.

It starts small: safety rules, a few workflows, maybe one or two “gotchas”.

Then it grows ...

It absorbs architecture summaries, folder tours, database schemas, deployment notes, and a pile of tool instructions. At the same time, frameworks encourage us to load more and more “skills” into the agent.

The result is predictable: agents get distracted, reach for the wrong tools, insist on legacy technologies, and your inference cost goes up.

What follows is a practical synthesis of the papers and discussions referenced below, translated into an operational framing I find useful: Directives, Capabilities, Tactics, Context.

Key takeaway 1: Creating lots of duplicated information is easy, even more so with AI at hand, but much of it may already be outdated by the time it is written. Creating the minimal amount of content that serves the purpose is hard.

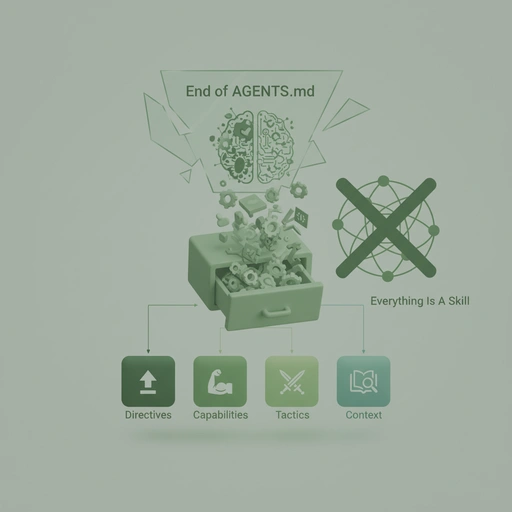

Key takeaway 2: Not all “agent context” is the same thing. Treating a safety law, a tool manual, a learned procedure, and an architecture reference as one blob (“skills”) creates confusion and cost.

The agent-context junk drawer

There are two common failure modes I keep seeing:

- Static context becomes a magnet. If you tell the agent about something (“we once used X”), it will try to use it, even when it’s irrelevant.

- Procedures conflict. When you load many “skills”, you’re often loading incompatible rules-of-thumb. The agent can’t tell what is a hard constraint vs. a soft suggestion.

So the agent does what LLMs do: it produces plausible work — but not reliably aligned work.

Less bloated context is more effective

I’m not claiming the papers below are the final word, but they provide strong directional evidence:

- Repository context files can backfire. In Evaluating the Effectiveness of Repository-Level Context Files for Coding Agents (Gloaguen et al., ETH Zurich, 2026), LLM-generated context files decreased task success by ≈3% on average, while developer-written ones gave only a small boost (≈4%). The same setup reported >20% increases in inference cost and execution time when injecting repository context.

- Procedural “skills” can help, but only if curated and few. SkillsBench (Li et al., 2026) found curated skills improve outcomes (reported +16.2pp on average), but zero-shot, self-generated skills provide zero or negative benefit. It also observed a “less is more” effect: 2–3 focused skills per task is often optimal; 4+ starts to degrade due to cognitive overhead and conflicts.

- What a “skill” really is (in research). The GEPA / gskill line of work frames skills as learned, repo-specific procedural heuristics discovered from agent rollouts, not as “a tool integration”.

My practical takeaway from that mix of research and discussion is:

- Use static, always-injected files only for hard behavioral constraints.

- Keep procedural guidance small, proven, and selected per task.

- Keep architecture/reference discoverable on demand.

Do not call everything a “skill”

If a hammer (tool capability), a blueprint (architecture context), and a survival reflex (safety directive) are all called “skills”, you can’t manage the system.

You can’t reliably load the right things. You can’t reason about token budget. And you can’t debug agent behavior because you don’t know which class of instruction caused it.

A practical taxonomy for agent context

From that, I find it useful to separate agent context into four different classes. This framing is intentionally simple. The point is not novelty, but operational clarity.

1) Directives (the global laws)

What it is: non-negotiable rules: safety, mode-switching, approval gates, “never do X”.

Where it lives: AGENTS.md

What it must not become: an architecture wiki.

Example directives:

- “Never run destructive commands.”

- “In planning mode, don’t change implementation artifacts.”

- “Ask for explicit approval before execution.”

2) Capabilities (tool manuals)

What it is: how to operate a specific tool/CLI/integration.

Where it lives: skills/<tool-name>/ (examples: skills/web-search/, skills/agent-browser/)

Example capability content:

- required command formats

- authentication/setup notes

- output parsing conventions

3) Tactics / SOPs (learned skills)

What it is: repo-specific, empirically useful procedures. Think: “When doing X in this codebase, follow this checklist.”

Where it lives:

- skills/tactic-<name>/ (e.g., skills/tactic-elixir-parity-check/)

- skills/learned-<name>/ (e.g., skills/learned-event-mapping-rules/)

Critical rule: load at most 2–3 tactics per task. Anything beyond that tends to become self-conflicting guidance.

Example tactics:

- “Always add a failing test fixture before touching the projection layer.”

- “When changing routing, verify paths via the smoke-test page.”
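The 2–3 tactic cap can be enforced mechanically instead of by convention. The following is a minimal sketch, not a description of how any particular framework selects skills: the `Tactic` type, its `keywords` tags, and the keyword-overlap scoring are all assumptions made for illustration.

```python
from dataclasses import dataclass

MAX_TACTICS_PER_TASK = 3  # upper end of the "2-3 tactics per task" rule


@dataclass(frozen=True)
class Tactic:
    name: str                 # folder name, e.g. "tactic-elixir-parity-check"
    keywords: frozenset[str]  # hypothetical tags describing when the tactic applies


def select_tactics(task: str, tactics: list[Tactic],
                   limit: int = MAX_TACTICS_PER_TASK) -> list[Tactic]:
    """Return at most `limit` tactics whose keywords overlap the task text."""
    words = set(task.lower().split())
    scored = [(len(t.keywords & words), t) for t in tactics]
    relevant = [(score, t) for score, t in scored if score > 0]
    # Highest overlap first; ties are broken by name so the result is deterministic.
    relevant.sort(key=lambda pair: (-pair[0], pair[1].name))
    return [t for _, t in relevant[:limit]]
```

A real selector would probably use something better than word overlap (embeddings, explicit task labels), but the important part is the hard limit: tactics that do not make the cut are simply not loaded.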

4) Context (map / reference)

What it is: architecture, domain models, “how the system works”.

Where it lives: docs/, README.md, source code comments.

Rule: never auto-inject this into the system prompt. Let the agent discover it dynamically when needed (search, ripgrep, symbol lookup).
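The four classes and their loading rules can be written down as a small classifier over repo paths. This is a sketch under the path conventions used in this post (AGENTS.md or CLAUDE.md at the repo root, skills/tactic-* and skills/learned-* for tactics, any other skills/ folder for capabilities); the `SKILL.md` file name in the usage example and the function names are assumptions for illustration.

```python
from enum import Enum
from pathlib import PurePosixPath


class ContextClass(Enum):
    DIRECTIVE = "directive"    # always injected
    CAPABILITY = "capability"  # loaded when the tool is needed
    TACTIC = "tactic"          # selected per task, at most 2-3
    CONTEXT = "context"        # never auto-injected; discovered via search


def classify(path: str) -> ContextClass:
    """Map a repo-relative path to one of the four context classes."""
    parts = PurePosixPath(path).parts
    if parts in (("AGENTS.md",), ("CLAUDE.md",)):
        return ContextClass.DIRECTIVE
    if len(parts) >= 2 and parts[0] == "skills":
        if parts[1].startswith(("tactic-", "learned-")):
            return ContextClass.TACTIC
        return ContextClass.CAPABILITY
    # docs/, README.md, source files: reference material, found on demand.
    return ContextClass.CONTEXT


def auto_injected(paths: list[str]) -> list[str]:
    """Only directives belong in the always-on system prompt."""
    return [p for p in paths if classify(p) is ContextClass.DIRECTIVE]
```

For example, `auto_injected(["AGENTS.md", "docs/architecture.md", "skills/web-search/SKILL.md"])` returns only `["AGENTS.md"]`; everything else is loaded per task or left for the agent to find.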

One practical way to clean up an existing repo

If your current AGENTS.md is already a junk drawer, a practical migration can look like this:

- Cut AGENTS.md down to directives. Keep only behavior and safety.

- Move tool instructions into capability skills. One tool = one skill folder.

- Extract only the proven procedures into tactics. Start with 1–2.

- Move architecture into docs (or leave it where it is) — but stop injecting it.

- Add a “confusion trap” loop: when an agent fails, don’t dump more context. Instead:

  - write down the failure mode

  - decide whether it needs a directive, a capability clarification, or a new tactic

This keeps your agent context clear, simple, and therefore maintainable.
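The “confusion trap” step can be as lightweight as one structured record per failure. Here is a minimal sketch, assuming you keep failures as JSON lines in a file of your choosing; the `FailureRecord` fields and the `Remedy` names are hypothetical and only mirror the three options listed above.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Remedy(Enum):
    DIRECTIVE = "directive"    # a new hard rule in AGENTS.md
    CAPABILITY = "capability"  # a clarification in a tool's skill folder
    TACTIC = "tactic"          # a new, proven procedure


@dataclass
class FailureRecord:
    task: str          # what the agent was asked to do
    failure_mode: str  # what went wrong, in one sentence
    remedy: Remedy     # which class of instruction should change
    note: str = ""     # optional detail for reviewers


def to_jsonl_line(record: FailureRecord) -> str:
    """Serialize one failure as a single JSON line for an append-only log."""
    payload = asdict(record)
    payload["remedy"] = record.remedy.value  # store the enum as plain text
    return json.dumps(payload, sort_keys=True)
```

Forcing every failure into exactly one remedy class is the point of the exercise: it makes “just add more context” an option you have to argue for rather than the default.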

What you get from this

- Lower token bloat (and usually lower cost)

- Less distraction from outdated or irrelevant architecture summaries

- More debuggable behavior (you know what kind of instruction you’re changing)

- Better collaboration: teams can review directives/tactics like SOPs, not like random prompt lore

Closing

Keep global directives minimal, load capabilities when needed, apply only a small number of tactics per task, and make context discoverable.

Stop dumping your architecture into AGENTS.md.

Stop calling everything a skill.

References

- Gloaguen et al. (2026): Evaluating the Effectiveness of Repository-Level Context Files for Coding Agents — https://arxiv.org/abs/2602.11988

- SkillsBench (2026): Benchmarking How Well Agent Skills Work Across Diverse Tasks — https://arxiv.org/abs/2602.12670

- Community discussion: https://news.ycombinator.com/item?id=47034087

- “Delete your CLAUDE.md (and your AGENT.md too)” (video): https://www.youtube.com/watch?v=GcNu6wrLTJc

- GEPA blog: Automatically Learning Skills for Coding Agents — https://gepa-ai.github.io/gepa/blog/2026/02/18/automatically-learning-skills-for-coding-agents/