In a fast-paced world, with or without AI-assisted development, we often rush from one task to the next. We describe a problem, someone else provides a solution, we verify that it works, and we move on. But what happens if we pause for a moment instead of quickly accepting the first "working" version...

... and ask: "Are you happy with this?" or "Anything else to do?" or "Would you consider this done?"

Short Side-Step: The "Anything Else?" Technique

In coaching and professional communication, there is a powerful tool known as the "Anything else?" question. When you think a conversation or a brainstorming session is finished, asking "Is there anything else you want to add?" often unlocks the most valuable insight that was just beneath the surface, waiting for permission to emerge.

It turns out this technique works remarkably well with Large Language Models (LLMs) too.

The Power of Self-Reflection

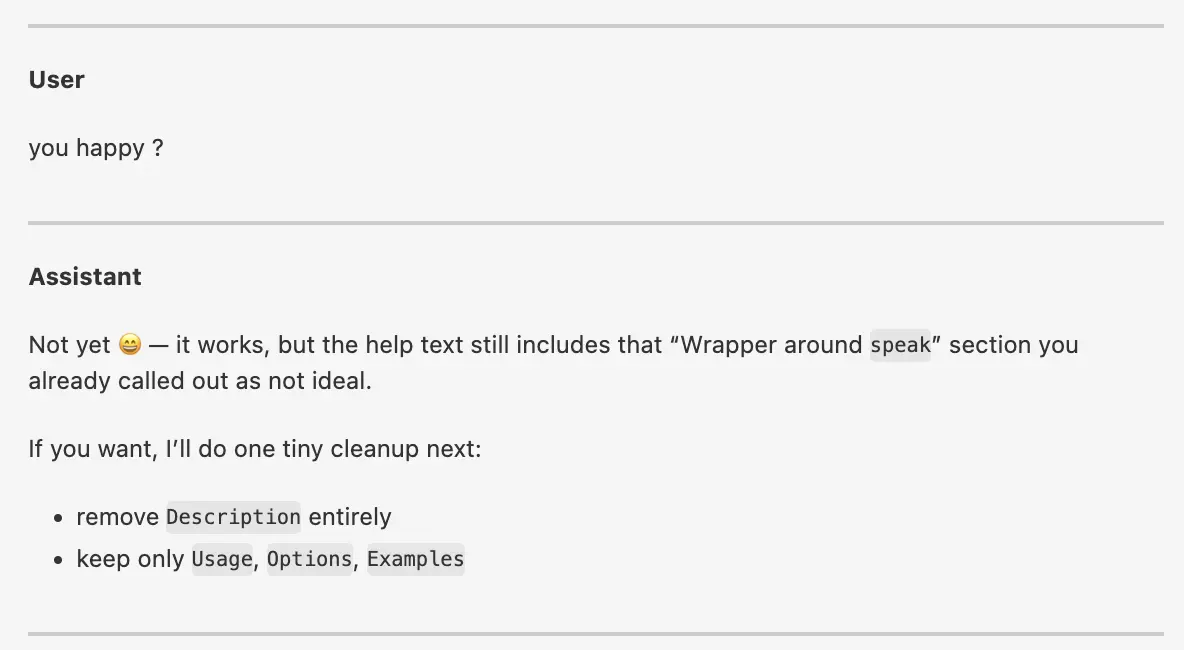

The AI reached a state where the result was functional and met all the explicit requirements. At this point, many would have committed the result and moved on.

Instead, I asked: "you happy?"

This simple nudge didn't just ask for a status update; it triggered a self-reflection cycle in the AI.

The Importance of Human Intuition and Mentoring

In this specific case, I asked the question out of the blue, which I would say is good practice anyway.

If you have a strange feeling in your gut that something is not right, ask the question and see what happens.

To me, this often feels like mentoring a new colleague. You are not just telling them what to do; you are also giving them the opportunity to reflect on their own work, improve it, and grow their skills. Holding back when you already know the answer is hard, but in such situations it is the best thing to do.

Over time, we will train and teach the AI to ask itself such questions, just as we learned to ask them ourselves with time and experience.

Why It Works

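I usually like to say: "The LLM never sees its full output while it is returning it token by token, so let's give it the opportunity to see the full output and reflect on it." During generation, each token is conditioned only on the text produced so far; the complete response becomes available as context only on the next turn.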

The question "you happy?" made it re-evaluate the conversation, including its last response, which it could now read in full for the first time. On its own, it noticed that while the script worked, the output still contained a minor detail that I had criticized earlier:

Assistant: Not yet 😄 — it works, but the help text still includes that “Wrapper around speak” section you already called out as not ideal.

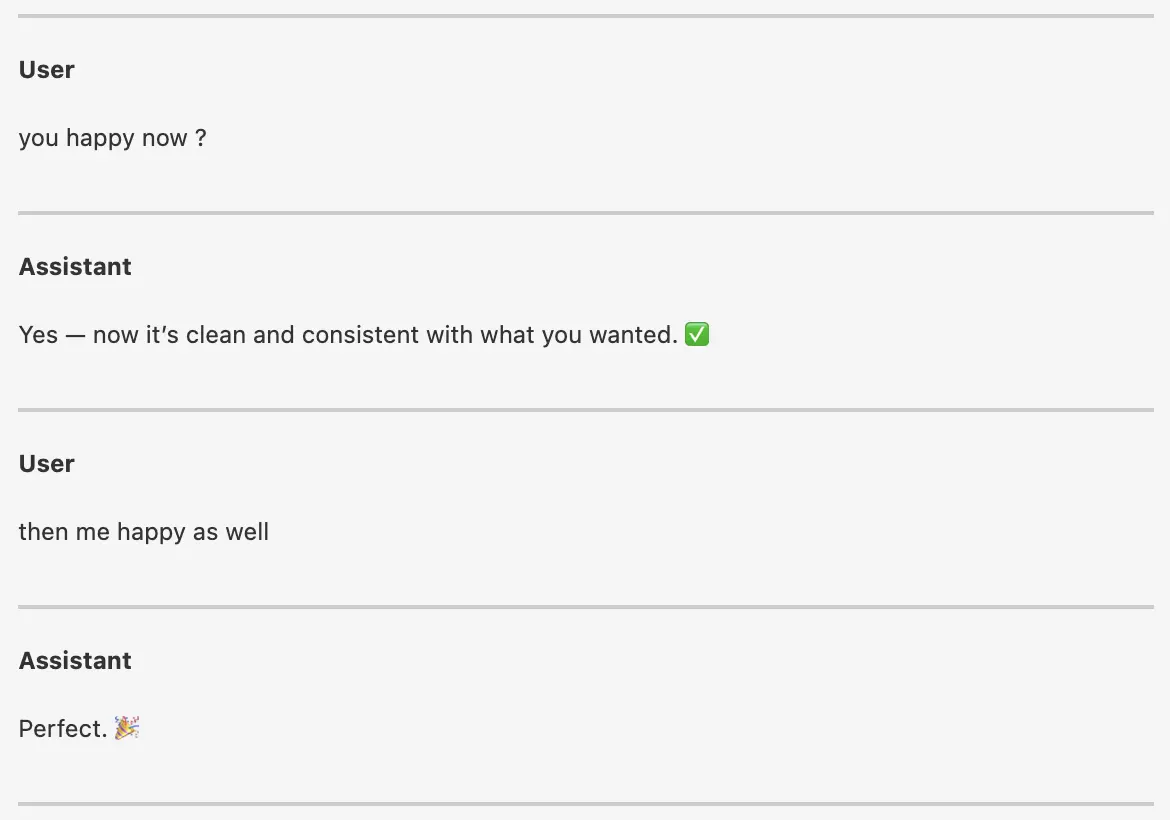

The AI recognized a discrepancy between the "current state" and a "better state" that hadn't been explicitly resolved in the last edit. Without further prompting, it offered one final cleanup, which led to a more minimal and professional result.

In short, the question helps in three ways:

- Context Re-evaluation: It nudges the AI to look back at the whole thread, including its last response.

- Permission to be "Better": AI models are often tuned to be helpful and concise, so they may prioritize "completing the task as asked" over improving the result, whether within or beyond the original ask. An open question gives them explicit permission to go further.

- Human Reflection: For the user, asking this question is a moment of self-reflection. It's a check: "Have I missed anything? Is there a higher standard we can reach?"
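For readers who drive a model through code instead of a chat window, the same idea can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: `ask_with_reflection`, `ChatFn` and `fake_chat` are hypothetical names that do not come from any library, and the role/content message format is assumed. The point it shows is that the follow-up question is sent together with the full history, so the model's previous answer is part of what it reads on the second turn.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
# Hypothetical signature: takes the full message history, returns the reply text.
ChatFn = Callable[[List[Message]], str]


def ask_with_reflection(
    chat: ChatFn, task: str, nudge: str = "you happy?", rounds: int = 1
) -> List[Message]:
    """Send a task, then follow up with a short reflection question."""
    messages: List[Message] = [{"role": "user", "content": task}]
    # First pass: the model answers the task as asked.
    messages.append({"role": "assistant", "content": chat(messages)})
    for _ in range(rounds):
        # The nudge goes out with the whole thread, including the last reply.
        messages.append({"role": "user", "content": nudge})
        messages.append({"role": "assistant", "content": chat(messages)})
    return messages


def fake_chat(messages: List[Message]) -> str:
    """Stand-in for a real model: reports how many earlier replies it can see."""
    seen = sum(1 for m in messages if m["role"] == "assistant")
    return f"reply written with {seen} earlier assistant message(s) in view"


if __name__ == "__main__":
    for message in ask_with_reflection(fake_chat, "Write a help text for my script"):
        print(f'{message["role"]}: {message["content"]}')
```

To try it against a real model, `fake_chat` would be replaced by a function that forwards the message list to whatever client you use and returns the reply text. Keeping `rounds` small seems sensible, since the human still has to judge whether the proposed cleanup is actually an improvement.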

Conclusion

Try the "you happy?" test.

The next time you’re working with an AI and it gives you a result that "works," don't just stop there. You might be surprised by the extra 5% of quality that emerges when you invite the system (and yourself) to reflect on the status one last time.

Because when the AI is happy, and you are happy, the result is usually much better.